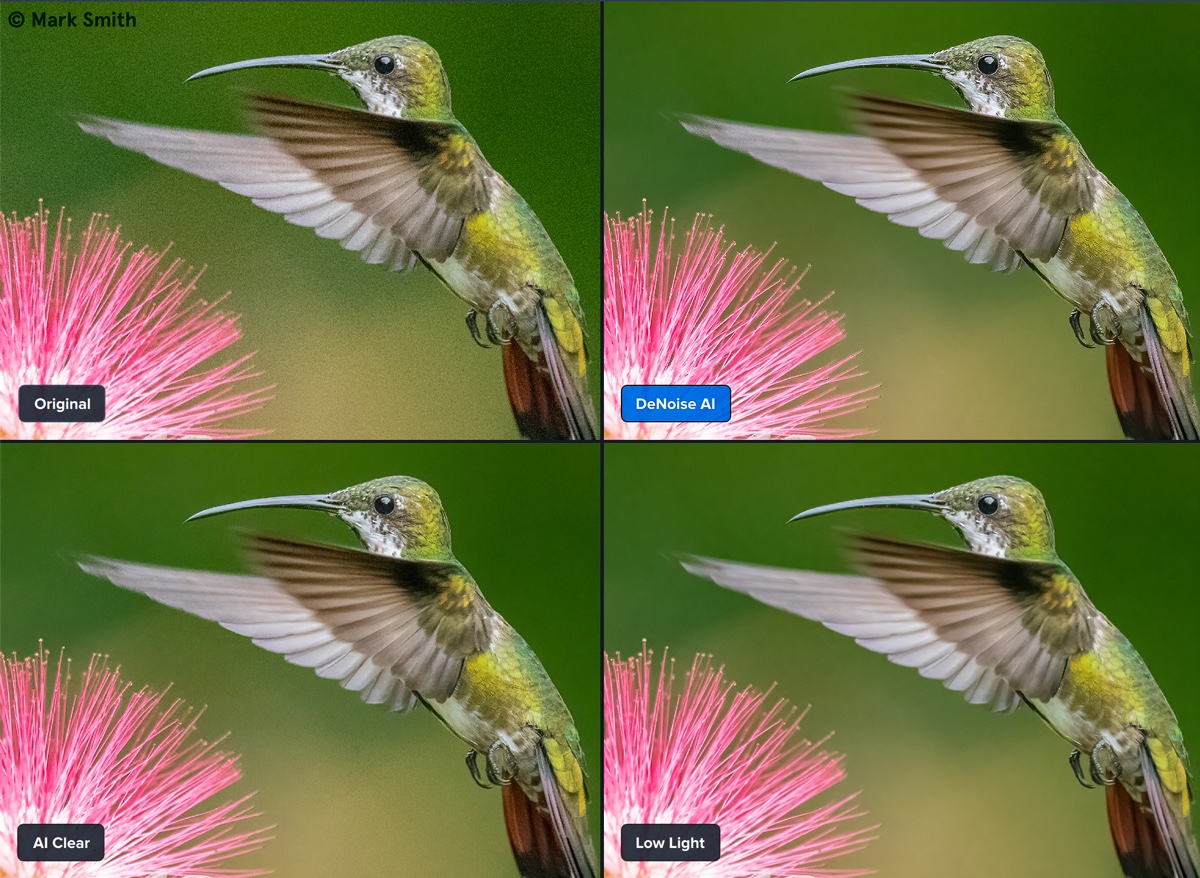

If you are photographing a landscape, not DSO, how do you choose WB?

OK, first you have to decide: do you want the colours to be accurate, or do you want the image to look nice/interesting? They are not always the same*. In reality most amateur astrophotographs are more art than science.

If you want accurate, then assuming you are not using any filters, I tend to think the best way is to use star colours to calibrate your lens/sensor. Target a suitable star field, look up the expected colours in, say, Stellarium and then adjust your red/blue multipliers until they are as close as you want.

If you are using any filters like UHC or pollution filters, or a modded camera, then all bets are off - choose whatever white balance you like, they won't be 'true' colours in any normal sense of the word.

\* The most obvious example is if you take a colour-balanced image of a proper dark sky: most people expect it to be dark blue (in so far as it has any colour at all). Show a green sky to most people and they'll say "Nah, that's wrong!" But that's the true colour of airglow. Of course for most DSO objects you'll subtract out the airglow in the workflow, so it won't be obvious. That doesn't work so well if you have foreground though - the foreground isn't affected as much by airglow.

My interest was piqued by this thread, so being too stingy to invest in Topaz, I had a dig into the similar open-source codes. I believe Topaz denoise is based on an ML algorithm called Noise2Noise. The code is available, but a bit awkward to use. I found a derivative algo called Noise2Void which is simpler to train and is also available as a plugin for ImageJ.

So I generated some noisy ISO25600 images on both my full-frame Canon 6d and my m4/3 Lumix G9, battled with installing all the right versions of ML libraries (another story) and did some testing. I've put the results up: compare-h.jpg (100%), compare-h2.jpg (400%).

I initially ran the training for a minimal 30 epochs (~30 mins) on the 6d and the G9 images and then ran the images through their 'native' and 'foreign' network models. It looks like the results are noticeably better with the native model. The comparison sets the native model result against a low-ISO (ISO1600) version of the same image (a sort of ground truth).

Went out climbing this morning, so left the computer training a 300 epoch model (Canon). For the 6d - which is relatively low noise anyway - there was a small improvement over the quick 30 epoch version, but I was gobsmacked how well it worked on the very noisy G9 image.

NOTE: training the model takes a long time, but once you have it, denoising an image takes only 2-3 secs.

As above, but comparing the 300 epoch results with the low-ISO version. The relevant files are all there on the drive if you want to look deeper.

One thing I did find was that the denoise worked much better on pure pixel noise - i.e., RAW. Once interpolated, the debayer algo (AHD) leaves more structured noise which is much more difficult to eradicate.
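Going back to the white-balance question: the star-colour calibration idea can be roughed out in a few lines of Python. This is only a sketch under some assumptions that are not from the thread. It assumes you have already measured linear (un-gamma'd) RGB values for a handful of unsaturated stars, and that you have converted each star's catalogue colour into an expected linear RGB triple by whatever method you trust. The function name `wb_multipliers` and all the numbers are made up for illustration. Green is held at 1.0 and the median is used so one badly measured star doesn't drag the result around.

```python
from statistics import median


def wb_multipliers(measured, expected):
    """Estimate red and blue multipliers, with green fixed at 1.0.

    measured, expected: lists of (r, g, b) tuples, one pair per star,
    both in linear units (assumption: no gamma curve applied).
    """
    if len(measured) != len(expected) or not measured:
        raise ValueError("need one expected colour per measured star")

    red_gains, blue_gains = [], []
    for (mr, mg, mb), (er, eg, eb) in zip(measured, expected):
        # Work with ratios to green so overall star brightness drops out.
        red_gains.append((er / eg) / (mr / mg))
        blue_gains.append((eb / eg) / (mb / mg))

    # Median rather than mean: less sensitive to a single outlier star.
    return median(red_gains), median(blue_gains)


# Made-up values purely for illustration, not real measurements.
measured = [(0.50, 1.00, 0.80), (0.40, 0.80, 0.60), (0.25, 0.50, 0.45)]
expected = [(1.00, 1.00, 1.00), (1.00, 1.00, 0.90), (0.90, 1.00, 1.00)]

red_mul, blue_mul = wb_multipliers(measured, expected)
print(f"red x{red_mul:.2f}, blue x{blue_mul:.2f}")
```

The two numbers would then go in as the custom red/blue multipliers in whatever raw converter you use. With filters or a modded camera the expected colours no longer apply, so the result wouldn't mean much.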
